GP107 will come in two versions: the NVIDIA GeForce GTX 1050 Ti 4GB and the GeForce GTX 1050 2GB.

https://translate.googleusercontent...52016/&usg=ALkJrhiEbmXwEoZqZlzjMkDhOHAQIOsiFg


Do we have a paper launch or launch date for these yet?

GTX 1050 Ti mid Oct, GTX 1050 late Oct, according to HWBattle.

WCCFTech said: The refined 16nm process is meant to offer even higher clock speeds and further stability on the Pascal GPUs. We can see the new chips clocking close to 2 GHz and maintaining those speeds under maximum load. Furthermore, GDDR5X has been a main issue for NVIDIA. Micron is under pressure to offer better yields of GDDR5X chips and that has gotten better over the while.

[…]

[At GTC 2017,] Jen-Hsun is also expected to showcase an updated NVIDIA GPU roadmap which will include new codenames and tech details for future chips. NVIDIA is expected to drop 10nm process and go straight for 7nm with their post-Volta GPUs, supporting HBM3 and GDDR6 memory standards.

From WCCFTech: "NVIDIA Rumored To Release Pascal Refresh With GDDR5X and Faster Clocks – Volta To Feature HBM2 and GDDR6 Support, 16 GB Standard Capacity [for a 256-bit bus]."

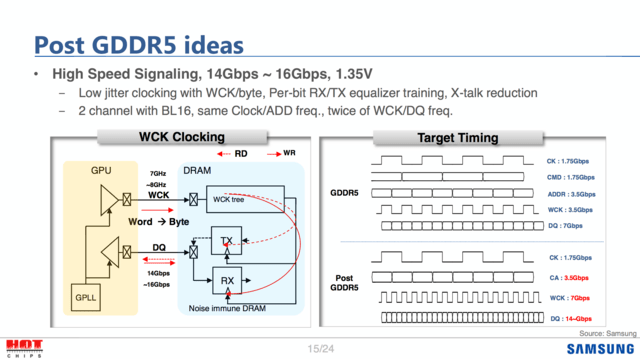

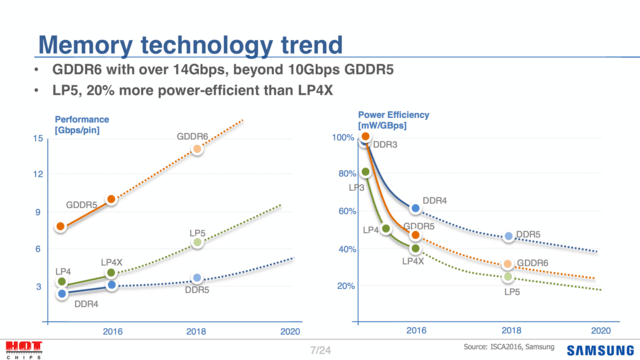

Do we know anything about GDDR6? Superficially, it seems like a refreshed GDDR5X, but there's got to be something deeper than just that.

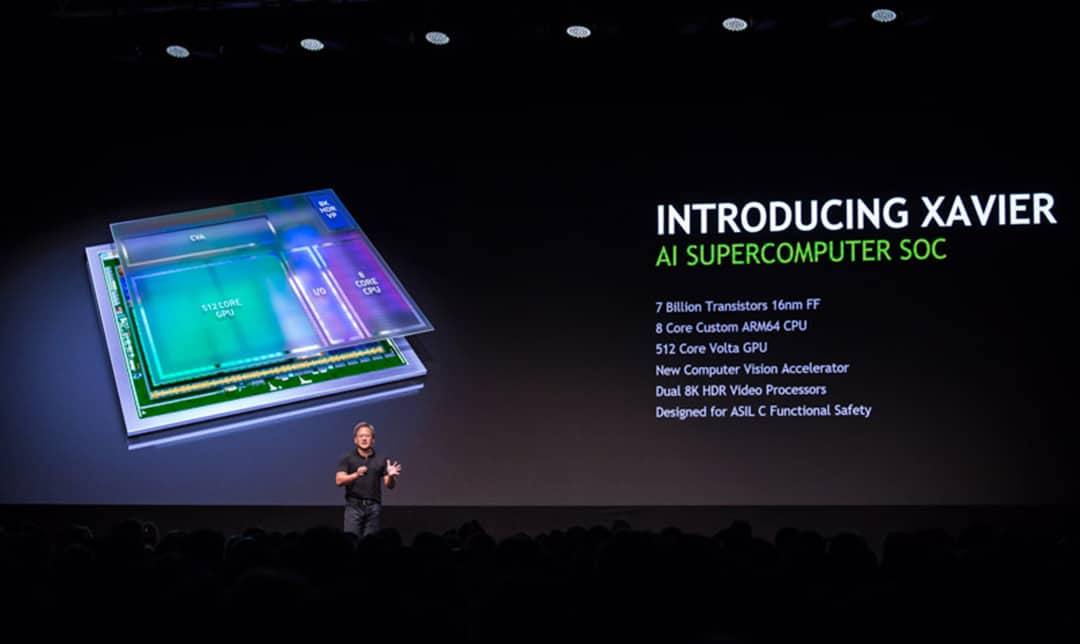

Slightly unrelated to Pascal, but a Volta-based SoC for the Drive PX series has been announced:

https://blogs.nvidia.com/blog/2016/09/28/gtc-europe-keynote/?__prclt=Ph225I5z

Neat find.

To save others the effort, see the pertinent slide below.

512 "cores" doesn't seem like a lot for a GPU, but there could be some additional nuance in play.

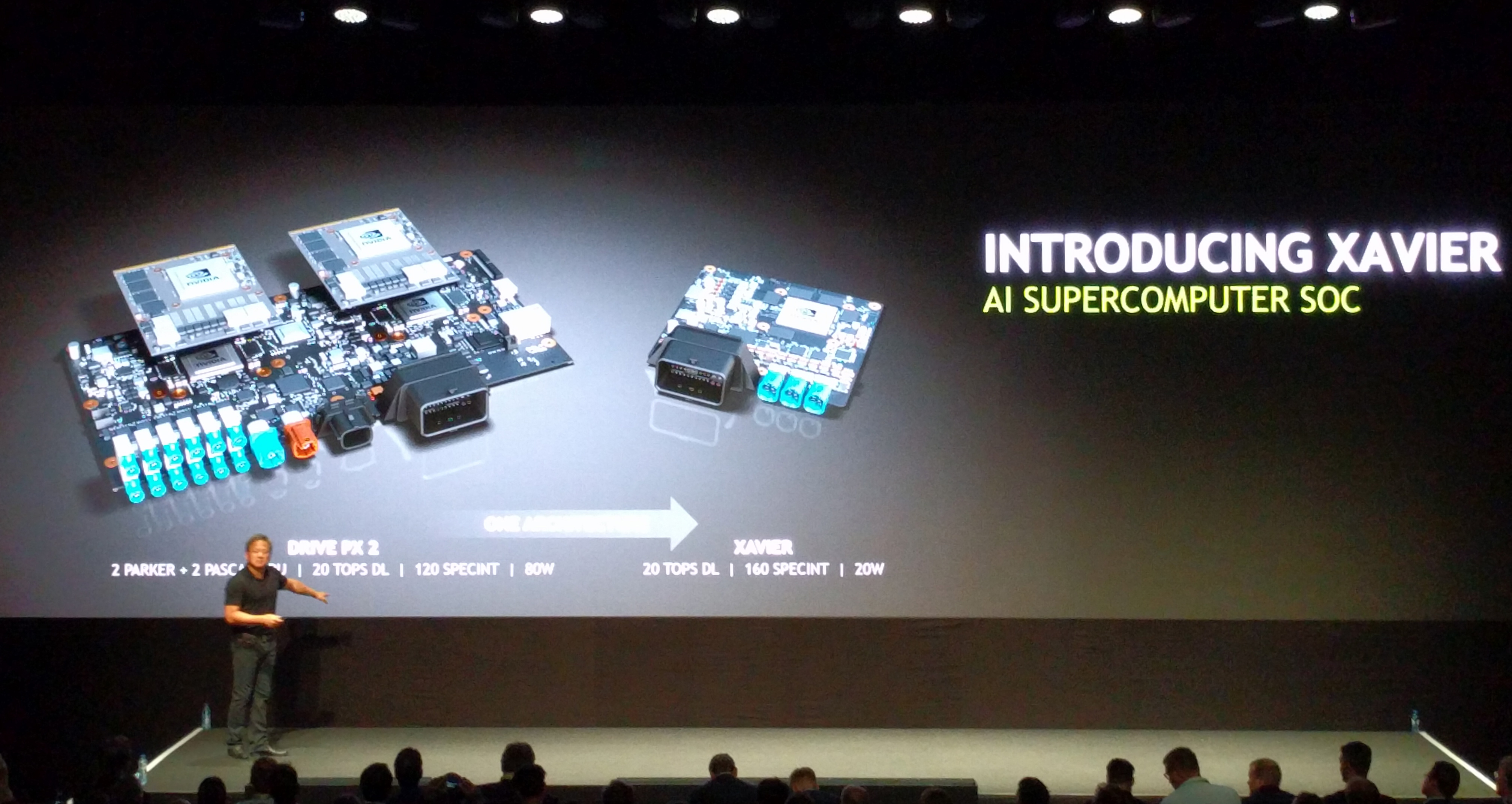

Btw, I saw 20 TOP/s claimed for this SoC, but now I can't find the claim lol

It's on a separate slide (referenced by AnandTech).

AnandTech said: With Xavier, NVIDIA wants to get to 20 Deep Learning Tera-Ops (DL TOPS), which is a metric for measuring 8-bit Integer operations. 20 DL TOPS happens to be what Drive PX 2 can hit, and about 43% of what NVIDIA’s flagship Tesla P40 can offer in a 250W card. And perhaps more surprising still, NVIDIA wants to do this all at 20W, or 1 DL TOPS-per-watt, which is one-quarter of the power consumption of Drive PX 2, a lofty goal given that this is based on the same 16nm process as Pascal and all of the Drive PX 2’s various processors.
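For anyone who wants to sanity-check the figures in that quote, here is a rough sketch in Python. It uses only the numbers quoted above (20 DL TOPS, 20 W, "about 43%" of a Tesla P40, one-quarter of Drive PX 2's power). The variable names are my own, and the derived P40 and Drive PX 2 values are back-calculated from the quote, not official specs.

```python
# Inputs taken directly from the AnandTech quote; nothing here is measured.
xavier_dl_tops = 20.0         # target INT8 throughput, in DL TOPS
xavier_watts = 20.0           # target power budget, in watts
fraction_of_p40 = 0.43        # Xavier is "about 43%" of a Tesla P40
fraction_of_px2_power = 0.25  # Xavier draws "one-quarter" of Drive PX 2's power

# Efficiency target stated in the quote.
tops_per_watt = xavier_dl_tops / xavier_watts

# Back-calculated values (assumptions implied by the quote, not spec-sheet numbers).
implied_p40_tops = xavier_dl_tops / fraction_of_p40
implied_px2_watts = xavier_watts / fraction_of_px2_power

# The quote says Drive PX 2 reaches the same 20 DL TOPS.
implied_px2_tops_per_watt = xavier_dl_tops / implied_px2_watts

print(f"Xavier target:       {tops_per_watt:.2f} DL TOPS/W")
print(f"Implied Tesla P40:   {implied_p40_tops:.1f} DL TOPS")
print(f"Implied Drive PX 2:  {implied_px2_watts:.0f} W, "
      f"{implied_px2_tops_per_watt:.2f} DL TOPS/W")
```

So if the quote is right, Xavier is aiming for roughly four times the INT8 efficiency of Drive PX 2 at the same throughput.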

Did NVIDIA ever get to the 10x Maxwell performance in Pascal?

The 10x was an invented metric. Lately NVIDIA makes its slides the way it would if it were in the smartphone market: 2x more VRAM bandwidth, 2x performance per watt, 4x performance at FP16, plus 2x more VRAM capacity = 10x more performance (2+2+4+2).

It was actually 10x faster at training AlexNet and yes they did achieve that (almost)

https://blogs.nvidia.com/blog/2015/03/17/pascal/

That is what they said, but where does it actually show any tests or benchmarks? As far as I can tell, they give NVLink as the reason it somehow gets 10x instead of 5x, which makes me wonder if they are comparing 2 GPUs vs. 1, because their half-precision performance doesn't go up nearly 10x on paper.


That 10x number includes a doubling in the number of GPUs due to NVLink. The part of the slide where NVLink is mentioned is cut off in the picture in the blog.
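To make the two readings of the 10x figure from this thread concrete, here is a small Python sketch. Both readings come from the posts above, not from any benchmark. The 5x per-GPU figure is the one mentioned in the thread, and all the names are mine.

```python
# Reading 1 (the "invented metric" post): the four headline gains are simply summed.
headline_gains = {
    "VRAM bandwidth": 2,
    "performance per watt": 2,
    "FP16 performance": 4,
    "VRAM capacity": 2,
}
summed_claim = sum(headline_gains.values())

# Reading 2 (the NVLink posts): roughly 5x per GPU, then doubled
# because NVLink lets the comparison use twice as many GPUs.
per_gpu_speedup = 5   # figure mentioned in the thread, not a measured value
gpu_count_ratio = 2   # 2 GPUs compared against 1
nvlink_claim = per_gpu_speedup * gpu_count_ratio

print(f"Summed headline gains:  {summed_claim}x")
print(f"Per-GPU gain x 2 GPUs:  {nvlink_claim}x")
```

Both land on 10x, which is why the slide alone can't tell you which comparison was actually made.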
