
AMD RyZen CPU Reviews

- Thread starter: Ike Turner

- Tags: amd

After all the hysteria, I am a bit surprised when I look at the gaming benchmarks:

http://www.pcgameshardware.de/Ryzen-7-1800X-CPU-265804/Tests/Test-Review-1222033/

Runs worse than an FX 9590 in Far Cry 4 and Witcher 3.


Ike Turner

Veteran

Shocking: Ryzen loses in benchmarks running at 720p on poorly multithreaded engines... vs. the Intel CPU that costs 2x as much...

Anyone who buys a Ryzen 7 config to play games in CPU-bound scenarios shouldn't be allowed near a PC ever again anyway...
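Why do reviewers test at 720p at all? A common simplified way to think about it (an assumption for illustration, not taken from any of the linked reviews) is that each frame waits on whichever of the CPU or GPU is slower, so the observed frame rate is roughly the minimum of the two caps. A Python sketch; `observed_fps` and every fps number in it are hypothetical:

```python
def observed_fps(cpu_fps_cap: float, gpu_fps_cap: float) -> float:
    """Simplified model: the slower of CPU and GPU sets the frame rate."""
    return min(cpu_fps_cap, gpu_fps_cap)


# Hypothetical caps for illustration only, NOT measured values.
cpu_caps = {"CPU A": 150.0, "CPU B": 120.0}
gpu_cap_4k = 60.0     # GPU is the bottleneck at 4K
gpu_cap_720p = 300.0  # GPU is barely loaded at 720p

for name, cap in cpu_caps.items():
    print(f"{name}: 4K {observed_fps(cap, gpu_cap_4k)} fps, "
          f"720p {observed_fps(cap, gpu_cap_720p)} fps")
```

Under this model both hypothetical CPUs show the same 60 fps at 4K, and the CPU gap only becomes visible at 720p. That is why CPU reviews drop the resolution, and also why a 720p result says little about how a CPU feels in a GPU-bound setup.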

DavidGraham

Veteran

What about Ashes? The 7700K is ahead by a decent margin, let alone the 6900K!

https://www.computerbase.de/2017-03...4/#diagramm-ashes-of-the-singularity-dx12-fps


To me it looks like Ryzen doesn't even beat a current i5, which is completely disappointing in my opinion. Application performance is really great in places and poor in others. Ryzen does not seem to be finished yet.

I just don't understand why AMD built up such huge hype ("fastest 8-core processor") and then delivers this.

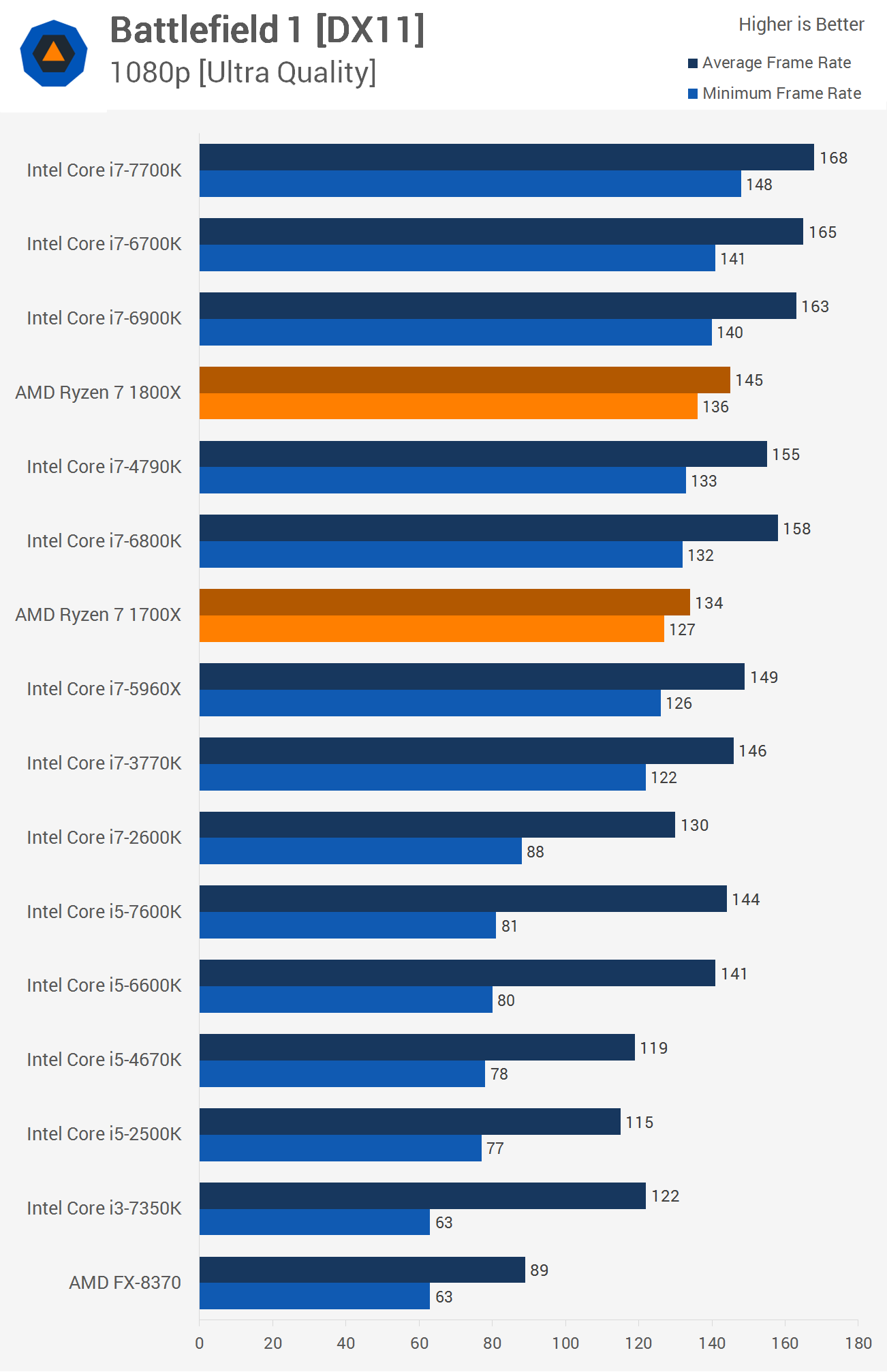

Battlefield 1 seems to run best.


Ike Turner

Veteran

Well, none of these engines are optimized to fully leverage 8 CPU cores (or even 16 threads...), so it's no surprise that 4-core/8-thread CPUs are still better suited to the current crop of PC games. Nothing surprising at all here. I have an i7-6700K and I'm sure as hell not going to switch to a Ryzen CPU anytime soon. That doesn't mean they're disappointing at all, though. They are totally destroying Intel in multi-threaded performance for half the price...

Looks like SMT is at least one cause of the weird gaming results:

http://www.gamersnexus.net/hwreview...review-premiere-blender-fps-benchmarks/page-7


Total Warhammer shows the biggest change in performance when disabling SMT. The AMD R7 1800X moves from ~127FPS AVG (1% low: 90, 0.1% low: 65.7) to ~153FPS AVG with SMT0. That’s an increase in performance of 20.5% by disabling AMD’s most advertised property. In the 1% and 0.1% low values, AMD moves from 90 to 117.3FPS (1% low) and from 65.7 to 101.7FPS (0.1% low), indicating further that SMT hamstrings frame latency significantly.
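The percentages in the quote follow directly from the before/after numbers. A quick check in Python, with the figures copied from the quote (the averages are approximate, and `pct_change` is just a hypothetical helper name):

```python
# Before/after figures copied from the GamersNexus quote (R7 1800X,
# Total War: Warhammer). The averages are approximate ("~127", "~153").
smt_on = {"avg": 127.0, "1% low": 90.0, "0.1% low": 65.7}
smt_off = {"avg": 153.0, "1% low": 117.3, "0.1% low": 101.7}


def pct_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0


for metric, before in smt_on.items():
    after = smt_off[metric]
    print(f"{metric}: {before} -> {after} FPS ({pct_change(before, after):+.1f}%)")
```

Only the 20.5% average gain is stated in the quote; by the same arithmetic the 1% and 0.1% lows improve by roughly 30% and 55%, which is what "SMT hamstrings frame latency" refers to.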


Really solid review by CB: https://www.computerbase.de/2017-03/amd-ryzen-1800x-1700x-1700-test/4/ (looks like they also had 4 guys on it...)


Something is b0rked there: look at e.g. the CB15 MT score, which is half of what other places got.

Have any reviews compared SMT on and off performance?

At least Tom's and ComputerBase.

DavidGraham

Veteran

Not even this one:

That sounds good, but the 6900K (8C/16T) is ahead by 20% in this very title as well!

Ike Turner

Veteran


And is... 100% more expensive. Let's not get ahead of ourselves, shall we? The i7-6900K is twice the price, is equal to or slower than the $500 1800X in MT workloads (and sometimes gets totally destroyed)... but is 20% faster in CPU-bound game benchmarks. No big deal in the grand scheme of things.

Edit: And yeah, there are definitely some issues with SMT based on all the results coming in. IIRC, AMD contacted reviewers yesterday asking them to run benches with and without SMT enabled.
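To put rough numbers on the price argument: assuming $500 for the 1800X, "twice the price" ($1000) for the 6900K, and a flat 20% gaming lead for the 6900K (a simplification, since the gap varies per title), performance per dollar works out as follows. `perf_per_dollar` is a hypothetical helper:

```python
# Assumptions from the post: $500 for the 1800X, "twice the price" ($1000)
# for the 6900K, and a flat 20% gaming lead for the 6900K.
def perf_per_dollar(relative_perf: float, price_usd: float) -> float:
    """Relative performance delivered per dollar spent (hypothetical helper)."""
    return relative_perf / price_usd


r7_1800x = perf_per_dollar(1.00, 500.0)   # baseline
i7_6900k = perf_per_dollar(1.20, 1000.0)  # ~20% faster in CPU-bound games

print(f"1800X perf per dollar vs 6900K: {r7_1800x / i7_6900k:.2f}x")
```

Under these assumptions the 1800X delivers about 1.67x the gaming performance per dollar, even while losing the raw comparison.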

BF1 *multiplayer* (always considerably more CPU-heavy and more threaded):

Also see these notes about early BIOS versions affecting performance a lot (up to 25% between the ASUS and MSI boards):

https://translate.google.com/translate?sl=auto&tl=en&js=y&prev=_t&hl=en&ie=UTF-8&u=https://www.computerbase.de/2017-03/amd-ryzen-1800x-1700x-1700-test/2/#abschnitt_mainboards_mit_startschwierigkeiten&edit-text=

I would also expect these clock/state problems to mainly affect games, i.e. where the cores are not at 100% all the time.


Although if the problems were easily fixable, surely AMD would have just delayed the reviews.

Ike Turner

Veteran

They can't really delay the reviews given that the product is launching today/tomorrow...

gamervivek

Regular

SMT is also causing trouble in some games,

http://www.hardware.fr/articles/956-7/impact-smt-ht.html

For the hundredth time, AMD need to launch with better drivers.

Any virtualization benchmarks?


hoom

Veteran

Looks pretty good to me

Quite a lot of stuff where it's right up there with the 8-core Intel clock for clock; where it falls behind, it's often still beating the higher-clocked 4-core Intel.

Definitely bits where it's still well behind both, though.

But in everything it's vastly better than previous AMD CPUs.

*hoping there will be a 4.5GHz & reasonably priced 4-core somewhere down the line*

Intel gets performance hits from SMT too...

I kinda dream of an OS-level ability to switch SMT on/off based on load/app preference (though I seem to recall Windows can/does de-load the 2nd thread?)


Eh? It's a CPU, not a GPU.


DavidGraham

Veteran

GamersNexus outlined the "tricks?" AMD used when benchmarking Ryzen before the launch:

AMD inflated their numbers by doing a few things:

In the Sniper Elite demo, AMD frequently looked at the skybox when reloading, and often kept more of the skybox in the frustum than on the side-by-side Intel processor. A skybox has no geometry, which is what loads a CPU with draw calls, and so it’ll inflate the framerate by nature of testing with chaotically conducted methodology. As for the Battlefield 1 benchmarks, AMD also conducted using chaotic methods wherein the AMD CPU would zoom / look at different intervals than the Intel CPU, making it effectively impossible to compare the two head-to-head.

And, most importantly, all of these demos were run at 4K resolution. That creates a GPU bottleneck, meaning we are no longer observing true CPU performance. The analog would be to benchmark all GPUs at 720p, then declare they are equal (by way of tester-created CPU bottlenecks). There’s an argument to be made that low-end performance doesn’t matter if you’re stuck on the GPU, but that’s a bad argument: You don’t buy a worse-performing product for more money, especially when GPU upgrades will eventually out those limitations as bottlenecks external to the CPU vanish.

As for Blender benchmarking, AMD’s demonstrated Blender benchmarks used different settings than what we would recommend. The values were deltas, so the presentation of data is sort of OK, but we prefer a more real-world render. In its Blender testing, AMD executes renders using just 150 samples per pixel, or what we consider to be “preview” quality (GN employs a 3D animator), and AMD runs slightly unoptimized 32x32 tile sizes, rendering out at 800x800. In our benchmark, we render using 400 samples per pixel for release candidate quality, 16x16 tiles, which is much faster for CPU rendering, and a 4K resolution. This means that our benchmarks are not comparable to AMD’s, but they are comparable against all the other CPUs we’ve tested. We also believe firmly that our benchmarks are a better representation of the real world. AMD still holds a lead in price-to-performance in our Blender benchmark, even when considering Intel’s significant overclocking capabilities (which do put the 6900K ahead, but don’t change its price).

As for Cinebench, AMD ran those tests with the 6900K platform using memory in dual-channel, rather than its full quad-channel capabilities. That’s not to say that the results would drastically change, but it’s also not representative of how anyone would use an X99 platform.

Conclusion:

Regardless, Cinebench isn’t everything, and neither is core count. As software developers move to support more threads, if they ever do, perhaps AMD will pick up some steam – but the 1800X is not a good buy for gaming in today’s market, and is arguable in production workloads where the GPU is faster. Our Premiere benchmarks complete approximately 3x faster when pushed to a GPU, even when compared against the $1000 Intel 6900K. If you’re doing something truly software accelerated and cannot push to the GPU, then AMD is better at the price versus its Intel competition. AMD has done well with its 1800X strictly in this regard. You’ll just have to determine if you ever use software rendering, considering the workhorse that a modern GPU is when OpenCL/CUDA are present. If you know specific instances where CPU acceleration is beneficial to your workflow or pipeline, consider the 1800X.

For gaming, it’s a hard pass. We absolutely do not recommend the 1800X for gaming-focused users or builds, given i5-level performance at two times the price. An R7 1700 might make more sense, and we’ll soon be testing that.

http://www.gamersnexus.net/hwreview...review-premiere-blender-fps-benchmarks/page-8
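For a rough sense of how far apart the two Blender workloads in the quote are, one can count the samples traced per frame as width × height × samples per pixel. This is a simplification: it assumes GN's "4K" means 3840×2160 and ignores tile size and scene complexity. `pixel_samples` is a hypothetical helper:

```python
def pixel_samples(width: int, height: int, samples_per_pixel: int) -> int:
    """Total samples traced for one frame (hypothetical helper)."""
    return width * height * samples_per_pixel


amd_demo = pixel_samples(800, 800, 150)   # AMD's demo settings, per GN
gn_test = pixel_samples(3840, 2160, 400)  # assumes GN's "4K" is 3840x2160

print(f"AMD demo: {amd_demo:,} samples")
print(f"GN test:  {gn_test:,} samples")
print(f"Ratio:    {gn_test / amd_demo:.1f}x")
```

That is roughly 35x more samples per frame, one reason GN says its numbers and AMD's cannot be compared directly. The 16x16 vs 32x32 tile choice affects how evenly that work spreads over the threads and is not modeled here.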

