Are these 2 APIs being actively supported in games?

- Thread starter Davros

DavidGraham

Veteran

> Are devs still releasing games that use them or has PhysX been replaced by something else (direct compute maybe?)

Recently Metro Exodus supported PhysX. But the general support has slowed down to a crawl.

> and AMD's TruSound just abandoned?

Yes.

> Recently Metro Exodus supported PhysX. But the general support has slowed down to a crawl.

But with both UE and Unity using PhysX, it has wider support than ever before. It seems more like Havok is the rare exception nowadays?

DavidGraham

Veteran

Yeah, PhysX as middleware is widely used and optimized for nowadays, and it's featured in most engines. However, I assumed Davros was asking about the hardware accelerated PhysX effects, and Metro Exodus was the last game to support them.

> I assumed Davros was asking about the hardware accelerated PhysX effects

Hmm... having no PhysX experience, i always wonder how far the 'hardware acceleration' goes exactly.

Speaking of UE and Unity, i was assuming things like cloth simulation run on GPU and this is exposed - but i may be wrong, because how's it for AMD then? Likely they have their own cloth implementation not using PhysX?

Fluid / smoke simulation is still very rare in games, so likely not exposed, and likely it's the same for GPU rigid body simulation for massive destruction?

Would be interesting if anybody knows details...

BTW, when talking with other devs it often seems even essential features unrelated to GPU are not exposed. Things like callbacks to adjust contact velocity, or robotic joints for example. But i don't know if those limitations are just about a missing easy editor UI or indeed about missing API support.

DavidGraham

Veteran

> Speaking of UE and Unity, i was assuming things like cloth simulation run on GPU and this is exposed - but i may be wrong, because how's it for AMD then? Likely they have their own cloth implementation not using PhysX?

They are exposed and run on the CPU. NVIDIA made the latest SDK open source too. So you can theoretically run them on AMD GPUs as well.

> Fluid / smoke simulation is still very rare in games, so likely not exposed,

Those are GPU accelerated on NVIDIA GPUs.

> I assumed Davros was asking about the hardware accelerated PhysX effects,

Yes

> Hmm... having no PhysX experience, i always wonder how far the 'hardware acceleration' goes exactly.
>
> Speaking of UE and Unity, i was assuming things like cloth simulation run on GPU and this is exposed - but i may be wrong, because how's it for AMD then?

In the games I remember, there is little or no cloth on AMD. This may be because NVIDIA added the PhysX effects themselves and wanted to make PhysX really stand out.

> Fluid / smoke simulation is still very rare in games, so likely not exposed,

It is, and it's done in PhysX.

> Fluid / smoke simulation is still very rare in games, so likely not exposed, and likely it's the same for GPU rigid body simulation for massive destruction?

Not in the games I remember, e.g. UT3 and Ghost Recon Future Soldier.

Don't know about now, but NVIDIA had a bad reputation for not doing any optimisation for CPU PhysX,

e.g. it was using x87 code.

PPS: there was a hack that allowed you to use an AMD card for rendering and an NVIDIA card for PhysX.

> So you can theoretically run them on AMD GPUs as well.

And will AMD be doing this?

> NVIDIA made the latest SDK open source too. So you can theoretically run them on AMD GPUs as well.

Not sure about that, because on GitHub i can only find a memory manager for Cuda, but no Cuda code or gfx API compute shaders. https://github.com/NVIDIAGameWorks/PhysX/tree/4.1/physx

Maybe the GPU stuff is now all part of Apex? Apex has features that are interesting for GPU, but also it seems more of a framework to make integration easier, so i don't think so. (https://www.nvidia.com/object/apex.html)

So either i'm just unable to find the GPU sources, or they are still closed source and provided only by a dll which uses Cuda. (this is the origin of my confusion here)

But ignoring those politics, personally i think the question 'Why no more PhysX hardware acceleration?' has to be asked considering some points that did not exist years ago:

1. With low level APIs and async compute you want to distribute compute work along the rendering at times where there is little conflict and best performance. (E.g. the usual proposal: Do async compute while rendering shadow maps, pair ALU vs. bandwidth heavy workloads, etc.)

As long as PhysX runs on Cuda, this option is not present because the graphics APIs and Cuda have different mechanisms to dispatch and schedule work to the GPU. (Personal assumption! I have never used Cuda.)

To fix this, NV would need to port PhysX to multiple APIs, at least DX12 and VK. And the user would need to know a bit more about PhysX internals which makes it harder to use.

Maybe NV plans to do this next - i don't think they still profit much from keeping GPU PhysX vendor locked, and if the users are just Unity and Epic, the extra engine work would pay off.

2. The physics effects interesting for GPU (particles, cloth, soft bodies, foliage) are easy to implement in comparison to constrained rigid body dynamics. Also the geometry is already on GPU for rendering and can potentially be reused, but in a non standard format across various engines.

So if you are a big AAA developer and have the manpower, you likely get the best solution for those things with custom tech. This solution is then 'hardware accelerated' too (no matter how we define this term, there never was dedicated physics hardware on NV GPUs).

This point also applies to destruction debris which might not need accurate collision detection and solver.

3. Most people agree GPU physics are not worth it in the average case. It's surely nice to enhance PC games, but it's mostly eye candy so optional. And with GPU being mostly the bottleneck it's not that attractive.

(I'm curious how next gen might change this. Fluid is really expensive, but smoke combined with some volumetric lighting could drop some jaws at acceptable costs.)

Now with fine grained scheduling on GPU introduced with raytracing, i guess that running all physics on GPU could be done much more efficiently.

Before that GPU was attractive to accelerate the solver, but polyhedra and analytical shapes collision detection seemed too complex and divergent.

So in theory NV could make a more powerful PhysX for RTX cards i guess, utilizing those options that are not exposed to graphics APIs, also the BVH. (pure speculation)

But even then (and ignoring the vendor lock), all those CPU cores need some work too, and they are well suited for physics. So i think physics will remain mostly a CPU task for good reasons.
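To make point 2 above a bit more concrete, here is a minimal sketch of why cloth counts as 'easy': Verlet integration plus a few passes of distance-constraint relaxation is already a usable cloth. This is not PhysX code and not any engine's actual implementation. The function name `simulate_cloth`, the 2D grid, and all parameter values are made up for illustration, and it runs on the CPU in plain Python. A GPU version would run roughly the same two loops as compute passes over data that is already resident for rendering.

```python
import math


def simulate_cloth(width=8, height=8, spacing=0.1, steps=120,
                   dt=1.0 / 60.0, gravity=-9.81, iterations=10):
    """Hang a small 2D cloth grid from its top row; return final positions.

    Hypothetical illustration only: names and defaults are assumptions.
    """
    # Current and previous positions (x, y) of every particle.
    pos = [[col * spacing, -row * spacing]
           for row in range(height) for col in range(width)]
    prev = [p[:] for p in pos]
    pinned = set(range(width))  # the top row never moves

    # Distance constraints between horizontal and vertical neighbours.
    constraints = []
    for row in range(height):
        for col in range(width):
            i = row * width + col
            if col + 1 < width:
                constraints.append((i, i + 1))
            if row + 1 < height:
                constraints.append((i, i + width))

    for _ in range(steps):
        # 1. Verlet integration: velocity is implied by (pos - prev).
        for i, p in enumerate(pos):
            if i in pinned:
                continue
            vx, vy = p[0] - prev[i][0], p[1] - prev[i][1]
            prev[i] = p[:]
            p[0] += vx
            p[1] += vy + gravity * dt * dt

        # 2. Relaxation: push each pair back towards its rest length.
        for _ in range(iterations):
            for a, b in constraints:
                dx = pos[b][0] - pos[a][0]
                dy = pos[b][1] - pos[a][1]
                dist = math.hypot(dx, dy)
                wa = 0.0 if a in pinned else 1.0
                wb = 0.0 if b in pinned else 1.0
                if dist == 0.0 or wa + wb == 0.0:
                    continue
                corr = (dist - spacing) / dist / (wa + wb)
                pos[a][0] += dx * corr * wa
                pos[a][1] += dy * corr * wa
                pos[b][0] -= dx * corr * wb
                pos[b][1] -= dy * corr * wb
    return pos


if __name__ == "__main__":
    final = simulate_cloth()
    print("bottom-right particle:", final[-1])
```

Note there is no contact manifold, no friction cone and no joint solver here, which is the part that makes constrained rigid body dynamics so much harder to get right.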

DavidGraham

Veteran

> Dont know about now but nvidia had a bad reputation for not doing any optimisation for cpu physx
>
> eg: it was using x87 code

That wasn't really NVIDIA's fault. NVIDIA merely purchased the middleware; its developers took their time optimizing it according to their labor capacity and surrounding conditions.

> To fix this, NV would need to port PhysX to multiple APIs, at least DX12 and VK.

They already did this. PhysX uses Async Compute and now works on DX12 and Vulkan too (Quake RTX was about to use it).

> Maybe the GPU stuff is now all part of Apex? Apex has features that are interesting for GPU, but also it seems more of a framework to make integration easier, so i don't think so. (https://www.nvidia.com/object/apex.html)
>
> So either i'm just unable to find the GPU sources, or they are still closed source and provided only by a dll which uses Cuda. (this is the origin of my confusion here)

According to ExtremeTech,

> The APEX SDK is not needed to build either the PhysX SDK nor the demo and has been deprecated. It is provided for continued support of existing applications only… The APEX SDK distribution contains pre-built binaries supporting GPU acceleration. Re-building the APEX SDK removes support for GPU acceleration.

> The SDK is open source. The ability to run it on a GPU, on the other hand, is not. It’s not clear how much this actually matters, since the functions have been deprecated or moved to alternate sources. Regardless, it comes off badly, reading more like Nvidia thumbing its nose at stupid gamers who poke their fingers into areas where they shouldn’t be.

https://www.extremetech.com/computing/281697-nvidia-open-sources-physx-with-a-few-caveats-attached

Ike Turner

Veteran

Both UE & Unity are replacing PhysX (which is currently the default physics engine) with their own physics solution:

Unreal Engine Chaos

Unity physics + Havok

> They already did this, PhysX uses Async Compute and now works on DX12 and Vulkan too (Quake RTX was about to use it).

Any sources on this?

While searching, i found this: https://www.tweakpc.de/news/43366/nvidia-physx-gescheitert-quellcode-ab-sofort-open-source/

They assume: "PhysX has failed, so NV has no more interest and dumps it to open source. Likely it has failed because of vendor lock."

What bullshit.

But maybe there is something like disappointment among gamers because they miss stuff seen in the above PhysX videos and older games? That's a similar situation to seeing colliding foliage in older games but static foliage in newer games, leading to the 'lazy dev' prejudice.

Personally i think all this is more a shift in priorities. Larger and more detailed worlds don't come for free.

That said, personally i do not request pushing GPU physics. Instead i want robotics, and PhysX shows big improvements here:

This is really hard to do and proves PhysX is under very active development. It's much more impressive than brute-forcing 100K particles on GPU, and it enables new ideas for games, maybe even new genres.

Like this for example:

These guys have true fun while playing a game! I miss this feeling

Now imagine the enemies behave naturally, and they are not dumb and limited to offline animation data.

You need very good physics simulation for that. PhysX is late to the party here (personally i switched from Havok to Newton because it could do this robotics already 10 years ago), but they work on it.

Much more promising than GPU acceleration! (never can have enough GPU power for graphics alone)

> Looks like AMD have a new audio api TrueAudio Next

Not exactly new, that was released alongside Polaris.

DavidGraham

Veteran

> Any sources on this?

The entire GameWorks library was ported to DX12, including PhysX, FleX, Flow, etc. Some DX12 games also came with PhysX, such as Metro Exodus.

https://developer.nvidia.com/gameworks-dx12-released-gdc

As for Async compute, it was added to PhysX as part of the DX12 porting process. They even released a small benchmark with it.

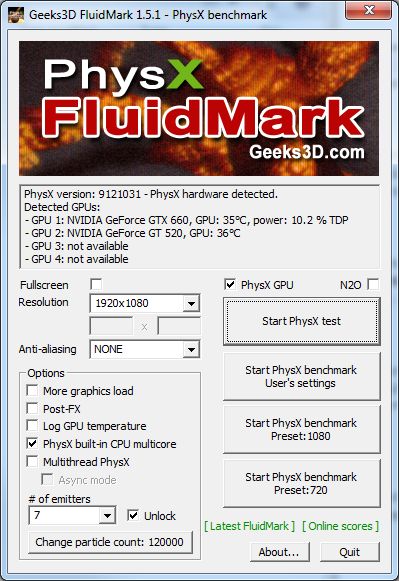

The test is PhysX FluidMark; pay attention to the Async mode under Multi Threaded PhysX.

Alessio1989

Regular

> They are exposed and run on the CPU. NVIDIA made the latest SDK open source too. So you can theoretically run them on AMD GPUs as well.
>
> Those are GPU accelerated on NVIDIA GPUs.

Did they change that part of the license stating you cannot change the GPU implementation? On PhysX that prevents AMD from running hardware acceleration with its own implementation; on most GameWorks libraries it prevents developers from optimising them for AMD GPUs.

Just checked PhysX 4.1 and the GameWorks binary license: source is still not provided, nor does the license allow you to modify them...

a) prohibits the end user from modifying, reproducing, de-compiling, reverse engineering or translating the NVIDIA GameWorks SDK;

(a) modify, translate, decompile, bootleg, reverse engineer, disassemble, or extract the inner workings of any portion of the NVIDIA GameWorks SDK except the Sample Code, (b) copy the look-and-feel or functionality of any portion of the NVIDIA GameWorks SDK except the Sample Code;

This is open source like my ass: they copy the BSD license into the SDK folders and put them on GitHub. However, to obtain access to the parts where only binaries and no sources are available, you have to sign up with an NVIDIA developer account and accept their binary license: https://developer.nvidia.com/gameworks-sdk-eula

which is quite different from the gimmick SDK license: https://developer.nvidia.com/gameworks-source-sdk-eula
