Pixels in general have gotten much more complex to render. Quad inefficiency when calculating complex lighting and filtering multiple shadow maps is not preferable. With async compute, deferring more is a better option than going back to forward rendering (heavy quad overdraw for every operation). Traditionally this kind of deferred rendering utilized GPUs poorly (simple pixel shader didn't utilize most resources at all), but async compute solves that problem. It of course adds some latency, but the amount of wasted work goes down a lot.

I am not disagreeing with the premise that AC helps in many situations. What I think is more nuanced is the way we would calculate the share of wall-clock time between the rasterization component and compute, and the difference between wall-clock time and utilization.

This does go back to my reference to Amdahl and the claim that rasterization's share of frame time is going down. Deferring in the front rasterization bucket adds latency, such that utilization is improved in the compute bucket. This means the serial portion (non-scalable with parallel resources) of the rasterization phase goes up, while the amount of redundant work in the compute portion goes down, potentially reducing its run-time (more if CU count rises).

Depending on the extent of the load on the raster phase, and how much winds up being culled/coalesced before getting to the compute side, its share of execution time actually goes up even if the overall run-time is better than the alternative.
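To put rough numbers on that, here is a minimal sketch of the two-bucket model I have in mind. It assumes the raster phase does not scale with CU count at all and the compute phase scales perfectly, which is the idealized Amdahl case. The function name and every millisecond figure are made up for illustration, not measurements.

```python
def frame_breakdown(raster_ms, compute_ms_one_cu, cu_count):
    """Return (total_ms, raster_share) for one frame.

    Assumptions (idealized): raster time is fixed regardless of CU count,
    compute time divides evenly across CUs.
    """
    compute_ms = compute_ms_one_cu / cu_count
    total_ms = raster_ms + compute_ms
    return total_ms, raster_ms / total_ms


# Hypothetical workloads: deferring more costs extra raster time but
# halves the (redundant) compute work.
less_deferred = frame_breakdown(raster_ms=4.0, compute_ms_one_cu=768.0, cu_count=64)
more_deferred = frame_breakdown(raster_ms=5.0, compute_ms_one_cu=384.0, cu_count=64)

print("less deferred:", less_deferred)
print("more deferred:", more_deferred)
```

With these made-up inputs the more deferred frame is shorter overall, yet the raster bucket takes a larger fraction of it, and raising `cu_count` pushes that fraction up further.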

What splitting this across frames might do, as the parallel portion is whittled down, is leave the theoretical floor of the wall-clock time at ~2x the serial component. That may not be a common concern except for very small frame budgets or something particularly twitchy, so I agree it can be generally a satisfactory trade-off.
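A sketch of how I arrive at the ~2x figure, under my own simplifying assumptions: the frame is split into exactly two stages (raster, then compute), the next frame's raster overlaps the current frame's compute, and the hand-off happens at one sync point per frame so each stage slot lasts as long as the slower stage. Names and numbers are hypothetical.

```python
RASTER_MS = 5.0  # hypothetical serial (non-scaling) raster time


def pipelined_latency_ms(raster_ms, compute_ms):
    """Latency of one frame through a two-stage, frame-synchronized pipeline."""
    slot_ms = max(raster_ms, compute_ms)  # both stages wait on the slower one
    return 2 * slot_ms


def unsplit_latency_ms(raster_ms, compute_ms):
    """Latency when raster and compute run back to back within one frame."""
    return raster_ms + compute_ms


# Whittle the parallel portion down, e.g. by adding CUs or eliding work.
compute_times = [20.0, 10.0, 5.0, 2.5, 1.0]
pipelined = [pipelined_latency_ms(RASTER_MS, c) for c in compute_times]
unsplit = [unsplit_latency_ms(RASTER_MS, c) for c in compute_times]

for c, p, u in zip(compute_times, pipelined, unsplit):
    print(f"compute={c:5.1f} ms  pipelined={p:5.1f} ms  unsplit={u:5.1f} ms")
```

Once the compute stage is shorter than the raster stage, the pipelined latency stops improving and sits at twice the raster time, while the unsplit case keeps approaching the raster time itself.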

One additional complication, particularly with GCN, is the statements made in the DX12 thread about over-relying on the AC synthetic for measuring a graphics vendor's ability to get concurrency out of a command stream. Drivers and GPU hardware do run ahead and pipeline work quite some distance ahead, perhaps imperfectly, in much the same manner that explicitly pipelining graphics and asynchronous compute across two frames (asynchronous across a broad synchronization point?) does.

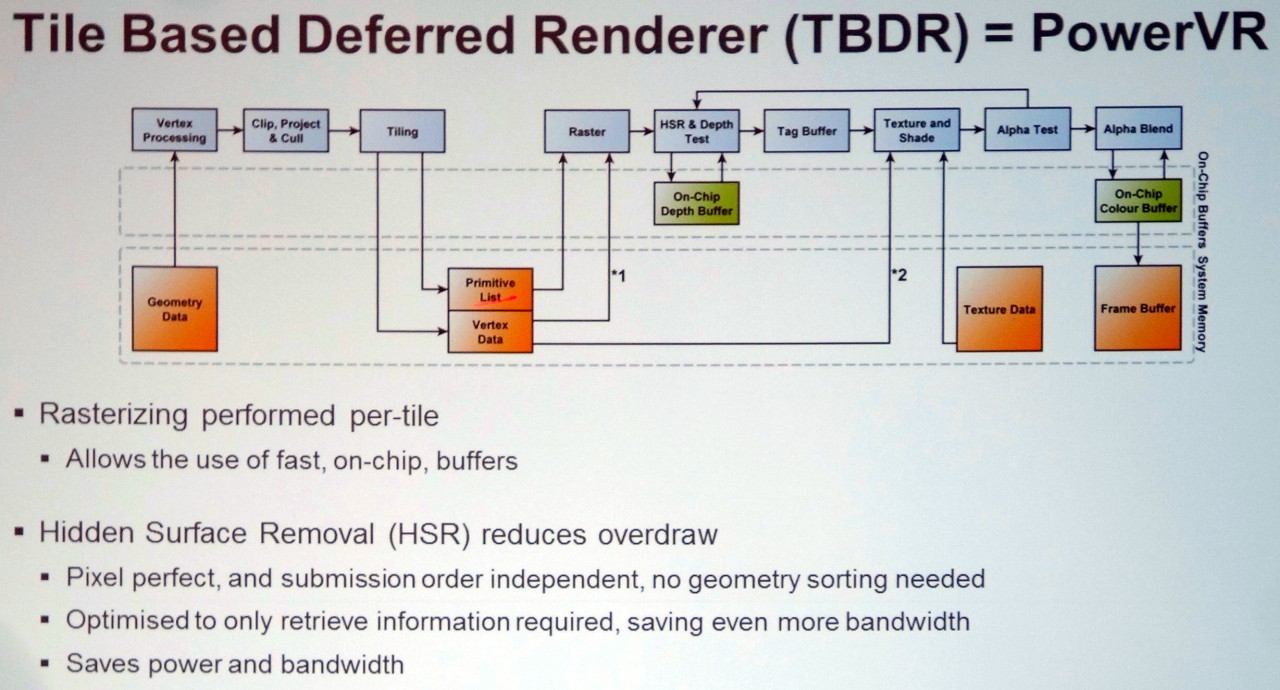

Perhaps certain GPUs that have coherent color pipelines, more aggressive drivers, and more flexible front ends are more effective in doing this on their own, whereas an architecture with incoherent raster output, inferior geometry throughput and culling, and synchronization issues and driver bugs for deferred engines seems to benefit more from the explicit split (citation: AMD says Vega's coherent ROPs avoid the latter issue).

A driver or intelligent GPU might in the future optimize the pipelined case, by detecting a frame split like this and quietly reordering the second frame's work back into the first or eliding some stream-out and read-in as it sees fit.

If you are scared about the total pipeline latency, you can always split the screen into big macro tiles, and render + light them all concurrently. This approaches forward rendering latency as the tile size gets smaller. You need to synchronize once before doing post processing (bloom, motion blur, DOF, etc. require neighbor pixels). This way you don't even need to overlap two frames, as you find plenty of concurrent tasks inside a single frame. Overlapping two frames, however, would still likely bring some benefits.

This or some variant might be what AMD is hoping for with the "scalability" descriptor for Navi and the rumors of a multi-chip solution.

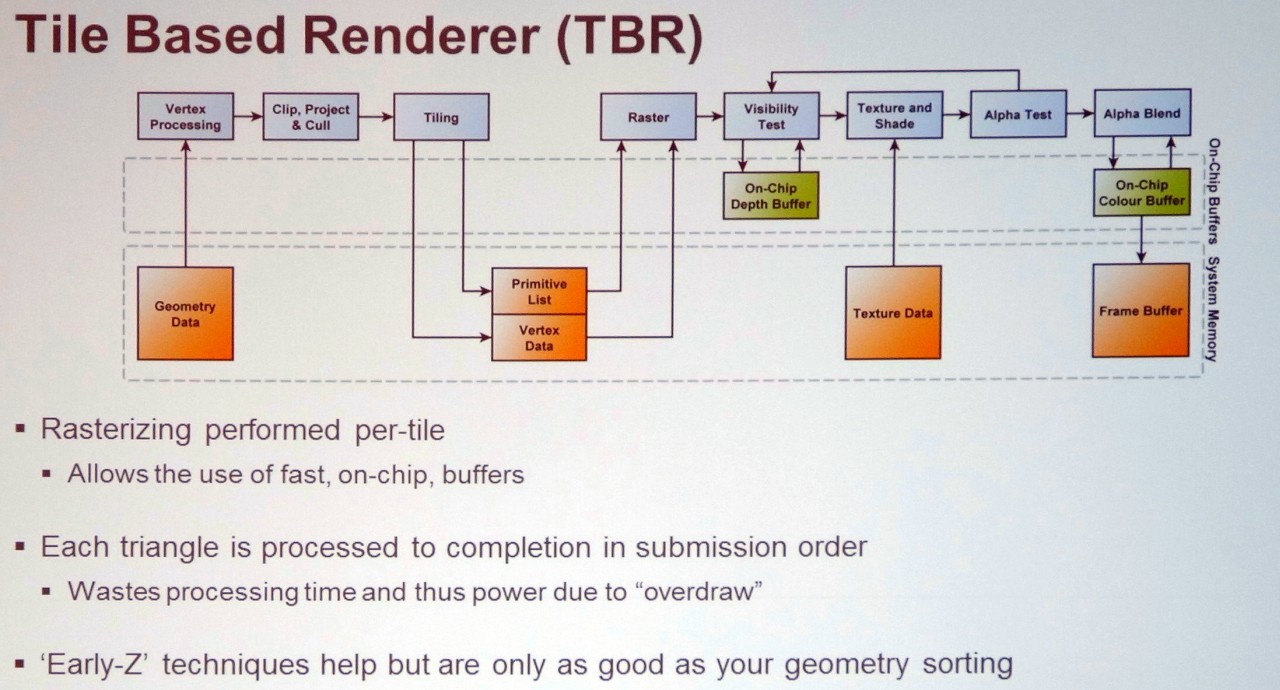

Would tiling and synchronization points fall under one of the two buckets in the wall-clock time calculation, or in another category? If this is somehow handled by a work distributor or a future version of Vega's binning logic, would it fall under the rasterization category?

To perhaps bring my digression more on-topic, a more fully deferred solution and traversal of acceleration structures could take a decent amount of time, but elide even more compute.

If something like the read and dirty bits for page table entries were extended to a graphics context, an acceleration structure might be some kind of hierarchical buffer of samples whose inputs (rays, surfaces, pages) haven't changed since the last frame, and their values. In that case, the compute portion drops precipitously.
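As a toy illustration of the idea, and nothing more: the sketch below keeps a flat (not hierarchical) cache of shaded samples and only re-shades the ones whose inputs were flagged dirty. `SampleCache`, `mark_dirty`, `resolve`, and the `shade` callback are all hypothetical names; no real hardware or API exposes this.

```python
from dataclasses import dataclass, field


@dataclass
class SampleCache:
    values: dict = field(default_factory=dict)  # sample key -> cached value
    dirty: set = field(default_factory=set)     # keys whose inputs changed
    shade_calls: int = 0                        # how much compute we paid for

    def mark_dirty(self, key):
        """Flag a sample whose inputs (ray, surface, page) were written."""
        self.dirty.add(key)

    def resolve(self, key, shade):
        """Return the sample value, re-shading only if missing or dirty."""
        if key in self.dirty or key not in self.values:
            self.values[key] = shade(key)
            self.shade_calls += 1
            self.dirty.discard(key)
        return self.values[key]


def shade(key):
    # Stand-in for an expensive lighting computation.
    return key * key


cache = SampleCache()
samples = [0, 1, 2, 3]

frame1 = [cache.resolve(k, shade) for k in samples]
calls_after_frame1 = cache.shade_calls

cache.mark_dirty(2)  # only one input changed between frames
frame2 = [cache.resolve(k, shade) for k in samples]
calls_in_frame2 = cache.shade_calls - calls_after_frame1
```

In this toy, the second frame pays for one shade call instead of four, which is the kind of drop in the compute portion I mean; the bookkeeping and traversal cost of a real structure is not modeled here.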