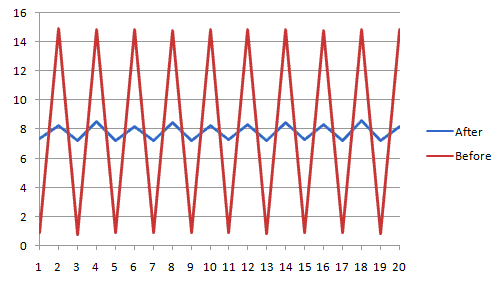

Since micro-stuttering is an issue for some people, I thought it would be interesting to see if there's a way to solve it. Personally I'm not bothered by it and haven't actually been able to see it, but I can prove it's happening with Fraps on my 3870x2. So I spent a couple of hours implementing a simple waiting mechanism to feed the GPUs at a more balanced rate. For best stability you want the second GPU to start working only once the first GPU is mid-frame (assuming 2 GPUs). This is the result:

Now, since I'm not able to see the micro-stuttering in the first place I can't really say if it's any smoother in practice, but at least the Fraps graph looks good.

What I did first was create a GPU-limited test case using my InteriorMapping demo, slowing it down a bit by adding work to the shader until it ran at about 120fps. Micro-stuttering happens when you're GPU limited, since you're feeding commands to the GPU faster than it can accept them. The more GPU limited you are, the worse the problem gets: the GPUs start working on their frames shortly after each other, and after crunching through them both finish at almost the same time too. That makes one frame appear for 1ms and the next for 15ms instead of 8ms each, as shown in the graph.
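To make the 1ms/15ms pattern concrete, here is a small sketch of the arithmetic in Python. It is not code from the demo. It assumes each GPU needs a round 16ms per frame (roughly the 120fps case with two GPUs) and that both GPUs are always busy; the function name and parameters are made up for illustration.

```python
def frame_intervals(gpu_frame_ms, start_offset_ms, num_frames):
    """Time between consecutive finished frames with 2 GPUs alternating frames.

    Assumes both GPUs are saturated, so each one starts its next frame the
    moment it finishes the previous one. start_offset_ms is how long after
    GPU 0 the second GPU begins its first frame.
    """
    finish_times = []
    for frame in range(num_frames):
        gpu = frame % 2                # even frames on GPU 0, odd on GPU 1
        frames_already_done = frame // 2
        start = gpu * start_offset_ms + frames_already_done * gpu_frame_ms
        finish_times.append(start + gpu_frame_ms)
    return [b - a for a, b in zip(finish_times, finish_times[1:])]


# Second GPU starts 1ms after the first: frames arrive in bunched pairs.
stuttering = frame_intervals(16.0, 1.0, 6)
# Second GPU starts when the first is mid-frame: frames arrive evenly.
balanced = frame_intervals(16.0, 8.0, 6)

print(stuttering)  # [1.0, 15.0, 1.0, 15.0, 1.0]
print(balanced)    # [8.0, 8.0, 8.0, 8.0, 8.0]
```

Both cases average 8ms per frame, which is why the framerate counter alone doesn't reveal the problem.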

So I inserted an event query at the end of each frame, using two queries, one for each GPU. At the end of a frame, the app syncs on that GPU's event query and measures how long it has to wait until that GPU's previous frame is complete. I then sum up all the waiting that was necessary and simply distribute it equally over the next two frames, which interleaves the frames better across the GPUs. Best of all, the framerate did not change because of this.
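The waiting scheme can be tried out in a toy simulation. The sketch below is one possible reading of the description, not the prototype itself: it models the event-query sync as "block until this GPU's previous frame has finished", measures the waits once over two frames, and from then on sleeps half of that sum before every frame. A real version would presumably keep re-measuring, which this sketch does not attempt. The 1ms CPU cost and 16ms GPU cost per frame, and all the names, are assumptions.

```python
def simulate_afr(num_frames, cpu_ms=1.0, gpu_ms=16.0, calibrate_at=None):
    """Simulate two GPUs rendering alternate frames; return frame finish times.

    If calibrate_at is set, the sync waits of the two frames just before that
    frame index are summed, and half of the sum is slept before each later frame.
    """
    gpu_free_at = [0.0, 0.0]   # time at which each GPU finishes its queued work
    finish = []                # completion time of every frame
    waits = []                 # time the CPU blocked on the sync, per frame
    delay = 0.0                # pacing sleep inserted before each frame
    now = 0.0                  # CPU clock in milliseconds
    for frame in range(num_frames):
        if calibrate_at is not None and frame == calibrate_at:
            delay = sum(waits[-2:]) / 2.0   # spread the wait over two frames
        now += delay + cpu_ms               # sleep, then build and submit
        gpu = frame % 2
        start = max(now, gpu_free_at[gpu])  # GPU starts once it is free
        gpu_free_at[gpu] = start + gpu_ms
        finish.append(gpu_free_at[gpu])
        # Stand-in for the event query: wait for this GPU's previous frame.
        wait = max(0.0, finish[frame - 2] - now) if frame >= 2 else 0.0
        waits.append(wait)
        now += wait
    return finish


def intervals(times):
    """Gaps between consecutive finish times."""
    return [b - a for a, b in zip(times, times[1:])]


unpaced = intervals(simulate_afr(8))
paced = intervals(simulate_afr(10, calibrate_at=4))

print(unpaced)  # [1.0, 15.0, 1.0, 15.0, 1.0, 15.0, 1.0]
print(paced)    # [1.0, 15.0, 1.0, 15.0, 8.0, 8.0, 8.0, 8.0, 8.0]
```

In this model the unpaced run blocks for 14ms on one frame and 0ms on the next. Sleeping 7ms before each frame instead shifts the second GPU to mid-frame, and the average stays at 8ms per frame, so the framerate is unchanged.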

I think something like this should be added to the driver with a simple checkbox in CCC to enable it. It can probably also be made more accurate than my simple prototype.

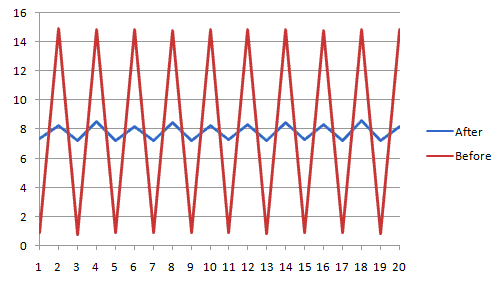
