Have any technical details leaked about this? It sounds like it's optimized to run on very low-end hardware, but I'm curious whether there's anything novel in how it's done that might lead to quality differences versus competing solutions like TSR. I haven't been able to find anything.


Unity 6 - Spatial-temporal post-processing (STP)

- Thread starter Scott_Arm


Frenetic Pony

Veteran

I'm guessing from the name that the idea is to use variable-rate shading for post-processing. Instead of post-processing at full rate after upscaling and TAA, you can throw in blue noise (a fancy term for randomness distributed so that the human eye can't easily pick out patterns in it) and post-process at something like 1/4 rate for areas of the screen that haven't changed much since the last frame. Then you do a TAA pass that includes the post-processing from the last frame and blend the two, instead of doing post-processing afterwards as normal.
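To make that guess concrete, here is a minimal Python sketch of reduced-rate post-processing driven by a per-pixel noise threshold. It illustrates the speculation in this post, not how STP actually works. Assumptions: a 4x4 Bayer matrix stands in for a real blue-noise texture, the mask is animated by adding a golden-ratio offset each frame, and `update_mask`/`compose` are hypothetical names.

```python
import math

# 4x4 Bayer matrix, used here only as a stand-in for a blue-noise texture.
BAYER_4X4 = [
    [0, 8, 2, 10],
    [12, 4, 14, 6],
    [3, 11, 1, 9],
    [15, 7, 13, 5],
]
GOLDEN = (math.sqrt(5.0) - 1.0) / 2.0  # ~0.618, per-frame offset that animates the mask


def noise_value(x, y):
    """Normalize the tiled matrix entry to a threshold in (0, 1)."""
    return (BAYER_4X4[y % 4][x % 4] + 0.5) / 16.0


def update_mask(width, height, frame, rate=0.25):
    """True where post-processing is re-run this frame (about `rate` of pixels)."""
    offset = (frame * GOLDEN) % 1.0
    return [[(noise_value(x, y) + offset) % 1.0 < rate for x in range(width)]
            for y in range(height)]


def compose(history, fresh, mask):
    """Take the freshly post-processed value where the mask is set, else reuse history."""
    return [[f if m else h for h, f, m in zip(h_row, f_row, m_row)]
            for h_row, f_row, m_row in zip(history, fresh, mask)]
```

With these parameters exactly a quarter of each 4x4 tile is refreshed per frame, and because any five consecutive golden-ratio offsets leave no gap wider than 0.25, every pixel is refreshed at least once every five frames.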

The result should be relatively invisible cost savings on post processing.


From the keynote it looks like it's only upscaling the post-process stage. They "crank up the post process effects" and you see the fps tank and looking at the on-screen visuals the depth-of-field definitely kicks up a notch. When they turn on STP the framerate recovers and there's an on-screen scaling factor of 65%.
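Assuming the on-screen 65% is a per-axis render scale and the output is 4K (neither is stated in the keynote), post-processing at that scale touches only about 42% of the output pixel count. A quick Python check of the arithmetic:

```python
def scaled_resolution(out_w, out_h, scale):
    """Apply a per-axis render scale; return (width, height, fraction of output pixels)."""
    w = round(out_w * scale)
    h = round(out_h * scale)
    return w, h, (w * h) / (out_w * out_h)


w, h, frac = scaled_resolution(3840, 2160, 0.65)
print(w, h, round(frac, 4))  # prints: 2496 1404 0.4225
```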

www.youtube.com


It looks like there's a significant list of things that are offered by Unity as post-processing effects:

docs.unity3d.com


Most games upscale before post-processing and then do post-processing at display resolution, or at least that's how upscalers are ideally intended to be used. I wonder what the logic is here. Is post-processing typically a large portion of frame time? I would have expected not, but maybe I'm wrong.

Unite 2023 Keynote

Our popular Unite conference for creators is back, live and in person in Amsterdam. Watch our exciting Keynote – featuring world-exclusive announcements, top ...


Unity - Manual: Post-processing and full-screen effects

- Ambient Occlusion
- Anti-aliasing
- Auto Exposure
- Bloom
- Channel Mixer
- Chromatic Aberration
- Color Adjustments
- Color Curves
- Fog
- Depth of Field
- Grain
- Lens Distortion
- Lift, Gamma, Gain
- Motion Blur
- Panini Projection
- Screen Space Reflection
- Shadows Midtones Highlights
- Split Toning
- Tonemapping
- Vignette
- White Balance


Frenetic Pony

Veteran

Usually no, post-processing isn't a huge cost. But since upscaling it is still a performance bump, then hey, why not, especially if there isn't any particular harm to image quality.

While there still aren't many details available, the source code is up on the Unity graphics GitHub if anyone wants to dive in:

github.com


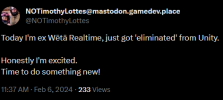

This was spotted by Aras:

mastodon.gamedev.place


Graphics/Packages/com.unity.render-pipelines.core/Runtime/STP at 27341ce4f5c8853d7f15e9420bff499f1087aceb · Unity-Technologies/Graphics

Unity Graphics - Including Scriptable Render Pipeline - Unity-Technologies/Graphics


Aras Pranckevičius (@aras@mastodon.gamedev.place)

Looks like Unity Graphics github repo already contains Timothy Lottes new "STP" upscaler https://github.com/Unity-Technologies/Graphics/tree/27341ce/Packages/com.unity.render-pipelines.core/Runtime/STP

Frenetic Pony

Veteran


So that's what Lottes was working on for a while. I'd seen the tweet from him, but it was so arcane I didn't know what to make of it. I wonder when he'll talk or blog about this thing; a new upscaler often makes for an interesting talk, and being able to do post-processing at the same time is neat.

Looks like it handles dynamic resolution and can compensate for inconsistent frame times when using motion vectors. It looks fairly complicated. I'm not 100% sure what the sections about "dilation" are referring to. Is it just elapsed time? Seems like it upscales using a blue noise texture to randomly sample history and converge to a stable image? It uses TAA, so is it like TAA with blue-noise sample selection or something?
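One plausible reading of "compensate for inconsistent frame times" is a constant-velocity rescale of motion vectors. The Python sketch below assumes that model; `rescale_motion` is a hypothetical helper, and the STP source may use its current and previous delta times differently.

```python
def rescale_motion(motion_px, motion_dt, target_dt):
    """Re-express a screen-space motion vector measured over motion_dt seconds
    as the displacement expected over target_dt seconds.

    Assumes constant screen-space velocity between frames (hypothetical model).
    """
    if motion_dt <= 0.0 or target_dt <= 0.0:
        return motion_px  # no usable timing info: leave the vector unchanged
    scale = target_dt / motion_dt
    return (motion_px[0] * scale, motion_px[1] * scale)


# Motion measured over a 60 Hz frame, reused across a 30 Hz hitch:
print(rescale_motion((8.0, -4.0), 1.0 / 60.0, 1.0 / 30.0))  # prints: (16.0, -8.0)
```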

Edit: I guess "randomly sample" is the wrong term. The noise is not random, and the blue noise textures are probably precomputed. With these blue noise techniques, do you use a different blue noise texture each frame, or the same texture? Like, the noise produces dithering from frame to frame, which reduces noise? That would necessitate a new noise mask each frame, no? Or do you use the same blue noise mask and then remove it?
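On the same-mask-versus-new-mask question, a small Python experiment helps: one pixel is 1-bit dithered against a noise threshold each frame and the bits are averaged, as a stand-in for temporal accumulation. With a static threshold the average never moves; animating the threshold with a golden-ratio offset per frame (one common way to animate a single blue-noise texture, assumed here and not taken from STP) lets the average approach the true value. NVIDIA's spatiotemporal blue noise takes a different route, precomputing a separate slice per frame.

```python
import math

GOLDEN = (math.sqrt(5.0) - 1.0) / 2.0  # fractional golden ratio, ~0.618


def dither_bit(value, noise, frame, animate):
    """1-bit threshold dither of `value` (in [0, 1]) against a noise threshold."""
    threshold = (noise + frame * GOLDEN) % 1.0 if animate else noise
    return 1.0 if value > threshold else 0.0


def accumulate(value, noise, frames, animate):
    """Average the dithered bits over `frames` frames (a stand-in for TAA accumulation)."""
    return sum(dither_bit(value, noise, f, animate) for f in range(frames)) / frames


static = accumulate(0.3, 0.5, 89, animate=False)
animated = accumulate(0.3, 0.5, 89, animate=True)
print(static, animated)
```

The static mask leaves this pixel stuck at 0.0 forever, while the animated one lands close to the 0.3 it is meant to represent.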

```
/// Blue noise texture used in various parts of the upscaling logic

/// Input color texture to be upscaled

/// Input depth texture which will be analyzed during upscaling

/// Input motion vector texture which is used to reproject information across frames

/// [Optional] Input stencil texture which is used to identify pixels that need special treatment such as particles or in-game screens

/// [Optional] Output debug view texture which STP can be configured to render debug visualizations into

/// Output color texture which will receive the final upscaled color result

/// Input history context to use when executing STP

/// Set to true if hardware dynamic resolution scaling is currently active

/// Set to true if the rendering environment is using 2d array textures (usually due to XR)

/// Set to true to enable the motion scaling feature which attempts to compensate for variable frame timing when working with motion vectors

/// Distance to the camera's near plane
/// Used to encode depth values

/// Distance to the camera's far plane
/// Used to encode depth values

/// Index of the current frame
/// Used to calculate jitter pattern

/// True if the current frame has valid history information
/// Used to prevent STP from producing invalid data

/// A mask value applied that determines which stencil bit is associated with the responsive feature
/// Used to prevent STP from producing incorrect values on transparent pixels
/// Set to 0 if no stencil data is present

/// An index value that indicates which debug visualization to render in the debug view
/// This value is only used when a valid debug view handle is provided

/// Delta frame time for the current frame
/// Used to compensate for inconsistent frame timings when working with motion vectors

/// Delta frame time for the previous frame
/// Used to compensate for inconsistent frame timings when working with motion vectors

/// Size of the current viewport in pixels
/// Used to calculate image coordinate scaling factors

/// Size of the previous viewport in pixels
/// Used to calculate image coordinate scaling factors

/// Size of the upscaled output image in pixels
/// Used to calculate image coordinate scaling factors

/// Number of active views in the perViewConfigs array

/// Configuration parameters that are unique per rendered view

/// Enumeration of unique types of history textures
DepthMotion,
Luma,
Convergence,
Feedback,
```
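The "Index of the current frame / Used to calculate jitter pattern" comment points at the usual TAA setup of a per-frame sub-pixel camera jitter. The excerpt doesn't say which sequence STP uses; the Python sketch below assumes a Halton(2, 3) sequence, a common choice for TAA, with a hypothetical 8-frame period.

```python
def halton(index, base):
    """Radical inverse of `index` in `base`: a low-discrepancy value in [0, 1)."""
    result, f = 0.0, 1.0
    while index > 0:
        f /= base
        result += f * (index % base)
        index //= base
    return result


def jitter_offset(frame_index, period=8):
    """Sub-pixel (x, y) jitter in (-0.5, 0.5) pixels from Halton(2, 3).

    The period of 8 is an assumption; index 0 is skipped because it maps to (0, 0).
    """
    i = (frame_index % period) + 1
    return halton(i, 2) - 0.5, halton(i, 3) - 0.5


for frame in range(4):
    print(frame, jitter_offset(frame))
```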

Edit: I'm guessing it has some similarity to this? https://research.nvidia.com/publication/2022-07_spatiotemporal-blue-noise-masks

Is spatiotemporal blue noise different from using multiple blue noise textures? Basically, if you use a series of independent blue noise textures, one per frame, they'd converge temporally towards random (white) noise, but spatiotemporal blue noise is blue noise spatially and converges towards no noise temporally. Or something.

Interesting. In this NVIDIA video they mention the problem of moving pixels, as with TAA. Basically, if a pixel moves its position on screen (samples from history moved with motion vectors), the noise will become white noise because it breaks the temporal convergence of the spatiotemporal blue noise. So that's a problem this STP algorithm would have to solve.


Dilation is an old trick, typically used to "enlarge" motion vectors so that pixels accumulate better on edges: sampling the low-res motion vectors will inevitably miss some pixels on the edges of the higher-resolution history buffer, so the motion vectors have to be dilated to avoid this. This is critical for good edge quality in motion with temporal reconstruction, but it will result in a weird parallax-like effect on the edges.
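A common form of this dilation (used in many TAA implementations, not confirmed for STP) takes, for each pixel, the motion vector of the closest-to-camera sample in its 3x3 neighbourhood, so foreground edges carry their own motion into the surrounding pixels. A minimal Python sketch; depth and motion are plain nested lists, and `reversed_z` is a hypothetical flag for depth buffers where larger values are closer.

```python
def dilated_motion(depth, motion, x, y, reversed_z=False):
    """Return the motion vector of the closest sample in the 3x3 neighbourhood of (x, y).

    depth[y][x] is a depth value and motion[y][x] an (mx, my) tuple.
    Coordinates outside the image are clamped to the border.
    """
    height, width = len(depth), len(depth[0])
    best_x, best_y = x, y
    for dy in (-1, 0, 1):
        for dx in (-1, 0, 1):
            sx = min(max(x + dx, 0), width - 1)
            sy = min(max(y + dy, 0), height - 1)
            d, best_d = depth[sy][sx], depth[best_y][best_x]
            closer = (d > best_d) if reversed_z else (d < best_d)
            if closer:
                best_x, best_y = sx, sy
    return motion[best_y][best_x]
```

Picking by depth rather than averaging keeps the vector on one side of the edge, which is what lets thin foreground silhouettes reproject cleanly.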


Frenetic Pony

Veteran


Well, "blue noise" is a family of "quasirandom" noise/sequences. The idea is to distribute samples evenly while keeping them as random as possible. Humans are good at spotting patterns, so you break up the patterns as much as possible. White (purely random) noise tends to have large clusters and voids: because it's entirely random, a pixel being black right next to another black pixel is just as likely as it being white (if you're just doing black/white). Blue noise, by contrast, tries to separate black and white pixels as much as possible without introducing any obvious patterns either.
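To see the "no clusters and voids" property numerically, the Python sketch below compares white-noise points with points from Mitchell's best-candidate algorithm, a simple way to get an approximately blue-noise point set (production dither textures are usually built with more elaborate methods such as void-and-cluster). Distances wrap around the unit square, as a tiling texture would; the fixed candidate count `k` is an assumption.

```python
import math
import random


def torus_dist(a, b):
    """Distance on the unit torus, so points near opposite edges count as close."""
    dx = abs(a[0] - b[0])
    dy = abs(a[1] - b[1])
    return math.hypot(min(dx, 1.0 - dx), min(dy, 1.0 - dy))


def white_noise_points(n, rng):
    """Independent uniform points: clusters and voids are left to chance."""
    return [(rng.random(), rng.random()) for _ in range(n)]


def best_candidate_points(n, rng, k=10):
    """Mitchell's best-candidate: each new point is the farthest of k random candidates."""
    points = [(rng.random(), rng.random())]
    while len(points) < n:
        candidates = [(rng.random(), rng.random()) for _ in range(k)]
        best = max(candidates, key=lambda c: min(torus_dist(c, p) for p in points))
        points.append(best)
    return points


def mean_nearest_neighbour(points):
    """Average distance from each point to its nearest other point."""
    total = 0.0
    for i, p in enumerate(points):
        total += min(torus_dist(p, q) for j, q in enumerate(points) if j != i)
    return total / len(points)


white = white_noise_points(256, random.Random(1))
blue = best_candidate_points(256, random.Random(1))
print(f"white: {mean_nearest_neighbour(white):.4f}  blue-ish: {mean_nearest_neighbour(blue):.4f}")
```

The best-candidate set ends up with a clearly larger mean nearest-neighbour distance: fewer near-duplicate points (clusters) and therefore fewer empty regions (voids).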

Over two dimensions that's easy to imagine; "spatiotemporal" does that over three. So if a pixel was white the previous frame, there's a greater chance it's black this frame, etc. This is mathematically a bit complicated, so the sequence is precalculated, and each frame steps to a new "blue noise" texture in a loop of them. The end result is that you can use fewer samples for a lot of things, like casting fewer rays for whatever raytracing you're doing, fewer rays for raymarching into fog, or here doing something like bloom at lower than full resolution, and when combined with TAA it will look like it has less aliasing than undersampling normally does, to human eyes anyway. It won't be more correct from a computer's perspective, but we're building for humans and not computers, so after a bunch of maths it's a cheap way to make things look better to humans than they actually are, saving rendering time in the process.

Screen captured because I don't know if "tweets" show up for people that aren't logged in anymore.

Timothy Lottes suggests STP halves the cost of TAA and also moves post-processing before TAA (I'm not sure if he's also using TAA to refer to upscaling here). He's suggesting that rendering at 1080p and upscaling to 4K should be roughly 4x faster than rendering at native 4K, but in practice it's generally more like 2x because of things like doing post-processing after the upscale and the upscaling itself being relatively expensive. It sounds like STP could be very fast relative to other upscalers and, with a high-quality upscale of post-processing, could push closer to the 4x performance we're looking for.
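The 2x-versus-4x argument can be made concrete with a toy frame-time model. All numbers below are made up for illustration, and the model assumes shading and any pre-upscale post-processing scale linearly with pixel count, which real workloads only approximate. Halving the upscaler cost (2 ms to 1 ms) stands in for the "TAA cost in half" claim.

```python
def frame_ms(shade_ms_native, post_ms_native, upscale_ms, pixel_fraction, post_before_upscale):
    """Toy frame-time model (hypothetical numbers, linear pixel-count scaling)."""
    shade = shade_ms_native * pixel_fraction
    post = post_ms_native * (pixel_fraction if post_before_upscale else 1.0)
    return shade + post + upscale_ms


NATIVE = frame_ms(16.0, 4.0, 0.0, 1.0, post_before_upscale=False)       # 4K native: 20 ms
POST_AFTER = frame_ms(16.0, 4.0, 2.0, 0.25, post_before_upscale=False)  # 1080p -> 4K: 10 ms
POST_BEFORE = frame_ms(16.0, 4.0, 1.0, 0.25, post_before_upscale=True)  # post at 1080p: 6 ms

for name, ms in (("post after upscale", POST_AFTER), ("post before upscale", POST_BEFORE)):
    print(f"{name}: {ms:.1f} ms, {NATIVE / ms:.2f}x faster than native")
```

In this toy setup, moving post-processing ahead of the upscale and halving the upscaler's cost takes the speedup from 2x to about 3.3x; the remaining gap to 4x is the upscaler's own fixed cost.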

He says scaling TAA doing RT denoise might be a 4x cost amplifier, but the wording of that is confusing to me. Is he saying integrating the denoise in the upscale would save and get closer to that 4x performance scaling we want?

Wonder what he thinks of visibility buffers/shading? The suggestion here is that deferred shading is technical debt, I think.

Edit:

It's also very clear the direction here is to get 1080p->4K type upscales. The bigger the upscale, the more benefit you get from moving post-processing before the upscale step. His comment about getting geometry to scale is also interesting. One thing I've found from playing UE5 games with Nanite is that they upscale better, at least in my opinion. I think it's because you have roughly one triangle per pixel rendered, instead of pixels shaded from large triangles that already covered many pixels. His overall direction seems to be to render at low resolution with geometry that scales per pixel, and then have a fast, high-quality upscaler at the very end. Nothing surprising there; it just suggests there are barriers to working in that direction in terms of technical debt. Not sure what "pipeline-dependent passes" means here, unless he's just referring to having passes in the TAA upscaler that handle post-processing differently from something like material shading.


Is that him, though?

There are a bunch of weird passes in these tweets which look like shifting some "blame" to PC developers (who's that? can he name a technically complex PC-only game?), with which I completely disagree, if I'm reading this correctly. In what world would previous-generation consoles (they hit 4K) be able to do 120 fps?

Frenetic Pony

Veteran


Yeah, his tweet storms have become kind of arcane and over the top. Like, we couldn't output 120 Hz, and output it to what? TAA upscaling isn't magic; even if you get to per-pixel scaling costs like he wants, you're still aliasing in space and time. My favorite example of how bad this can get with ever more detail is Forbidden West on the PS4. You can clearly see the art is designed for higher resolutions even with a TAA filter in place, with geometry aliasing and popping all over the place:

Meanwhile, the most impressive trailer I've yet seen for a game is that Fable teaser; they're concentrating on variable rate shading, trying to save where it's invisible, and thus get as much AA as possible on as much detail as possible:

Holy shit ... the visibility buffer is over 10 years old. I think the visibility buffer has had its day; better to jump straight to full raytracing now (which is not to say the samples shouldn't carry a lot of the same data as was stored in the visibility buffer, but for reprojection, not for shading the fresh samples).

Visibility buffers/deferred texturing are somewhat orthogonal to G-buffers/deferred lighting. You can combine visibility buffers with forward rendering, as shown in the original Intel paper ...
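As a rough illustration of what a visibility buffer holds, the Python sketch below packs a cluster ID and a triangle ID into one 32-bit value per pixel; a later pass would unpack them, re-fetch the vertex data and shade, whether that pass lights directly (forward-style) or writes a G-buffer. The 25/7 bit split and the function names are hypothetical; real engines choose their own layouts.

```python
TRIANGLE_BITS = 7   # hypothetical: up to 128 triangles per cluster
CLUSTER_BITS = 25   # hypothetical: up to ~33.5M clusters; 25 + 7 = 32 bits total


def pack_visibility(cluster_id, triangle_id):
    """Pack the IDs of the triangle covering a pixel into one 32-bit integer."""
    if not 0 <= cluster_id < (1 << CLUSTER_BITS):
        raise ValueError("cluster_id out of range")
    if not 0 <= triangle_id < (1 << TRIANGLE_BITS):
        raise ValueError("triangle_id out of range")
    return (cluster_id << TRIANGLE_BITS) | triangle_id


def unpack_visibility(packed):
    """Recover (cluster_id, triangle_id) for the material/shading pass."""
    return packed >> TRIANGLE_BITS, packed & ((1 << TRIANGLE_BITS) - 1)
```

The point is how little the buffer stores: no normals, albedo or roughness, only enough to find the triangle again, which is why it combines with either lighting strategy.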

Deferred rendering prevailed over forward rendering in high-end graphics simply because the performance cost of register spilling to memory became unacceptable with the rise of shader graphs, and because some advanced screen-space effects such as SSR and subsurface scattering couldn't be done with pure forward rendering. Lumen itself is incompatible with Unreal Engine's forward rendering path, since it hard-requires a G-buffer!


It's him. I wish he'd write somewhere longer-form. I think his point about PC is that graphics hardware carries architectural baggage because of its need to be backwards compatible ("HW vendors hands are tied, they mostly accelerate existing software"). He has a previous tweet thread about NUMA architectures being inevitable. It looks like he believes we're locked into a cycle that's not scaling well, because HW vendors have to scale existing software to be able to sell. Someone has to move first, and it looks like he's pointing more to the software side to move first. Again, Twitter is a horrible place for long-form content. It does sound like he's going to start blogging again in 2024.

If I were to guess, the "blame" goes to PC developers because PC is the platform that's locked into scaling old software, more so than consoles, where backwards compatibility is more recent. Essentially, a new architecture could probably succeed more easily in the console space because there hasn't historically been the same expectation of backwards compatibility, but I'm only guessing that's what he means.

I think what he's suggested in the post above is that if they'd moved away from current pc architecture there was an opportunity in the same timeframe to get to 4k 120 "restoration", but I don't know what restoration means in this context.

Well, consoles are "locked" into "scaling old software" way more than PCs as it stands.

Nothing stops you from saving G-buffer information in a forward renderer. Nothing stops you from accumulating lighting data in the forward pass with deferred shading. With MRT you can use MSAA and a G-buffer without full MS amplification of the G-buffer.

The absolute separation between forward+MSAA/deferred+G-buffer was always a silly simplification by people who didn't really want to explain their reasoning.


Now they are but they weren’t

Sure, but many renderers won't go there in practice for a multitude of reasons. Combining a G-buffer with a forward renderer means losing out on MSAA and transparency. Unifying nearly all of the rendering passes in a deferred renderer also means that you can't save on overdraw costs without doing another geometry pass (depth/visibility prepass), and having a mostly unified rendering pass will drop GPU occupancy due to the high register pressure/spilling from the combined rendering passes ...
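The occupancy point can be put in numbers. The Python sketch below uses GCN-style figures as assumptions (256 vector registers available per lane, allocation in blocks of 4, at most 10 waves per SIMD); other architectures use different budgets, but the shape is the same: more registers per thread means fewer waves in flight to hide memory latency.

```python
VGPRS_PER_LANE = 256     # assumed register budget per SIMD lane (GCN-style)
ALLOC_GRANULE = 4        # assumed allocation granularity in registers
MAX_WAVES_PER_SIMD = 10  # assumed hardware cap on resident waves


def waves_per_simd(vgprs_per_thread):
    """Number of waves that can be resident on one SIMD for a given register count."""
    if vgprs_per_thread <= 0:
        raise ValueError("register count must be positive")
    allocated = -(-vgprs_per_thread // ALLOC_GRANULE) * ALLOC_GRANULE  # round up to granule
    return min(MAX_WAVES_PER_SIMD, VGPRS_PER_LANE // allocated)


for regs in (24, 32, 64, 84, 128, 256):
    print(regs, "VGPRs ->", waves_per_simd(regs), "waves")
```

Under these assumptions, a "fat" unified shader that grows from 64 to 128 registers halves the resident waves from 4 to 2.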

If it's a choice between the evils of either having a G-buffer or efficiently implementing memory spilling in hardware, no doubt many vendors would prefer not to have the latter around. They figure that if developers are still going to use a G-buffer, there's a much bigger payoff to be had in optimizing the hardware for a fully deferred renderer. Going for the middle ground will just overcomplicate hardware design (spilling) with no benefit (MSAA) to boot ...

As for the last statement, it may have been viable in the past to employ some sort of heuristic (multiple fragments/depth discontinuities/etc) or edge detection algorithm to handle MSAA pixels but with Nanite we can't rely on such clever methods to skip much of the work anymore ...

Think of the distinction as a "multimodal" distribution where some configurations have more optimal peaks than others ...

Legacy software doesn't really have to be an issue from a hardware design perspective if the forces at play have enough foresight to avoid standardizing bad features (tessellation/state objects/etc.) and implementing them in the first place. Sometimes hardware designs age well enough to simply iterate upon them ...
